Polymorphic Malware: How AI Is Making It Harder to Detect

Table of contents

- What is an exploit and why does it matter?

- How did the traditional timeline work from discovery to weaponization?

- What is polymorphic malware?

- Why are traditional detection methods failing?

  - 1. Signature-based detection

  - 2. Behavioral analysis

  - 3. Heuristic analysis

- How does AI supercharge polymorphic attacks?

- What is vibecoding and how does it lower the barrier for threat actors?

- How has the timeline collapsed from weeks to hours?

- What does this look like in real-world attacks?

- What happens when speed meets mutation?

- What must defenders do differently?

  - 1. Detect breaches faster

  - 2. Monitor for outcomes, not signatures

  - 3. Leverage AI defensively

  - 4. Shift to proactive external monitoring

- How can organizations stay ahead?

AI isn’t just generating chatbot responses and images; it’s also generating malware that is increasingly hard to detect. Polymorphic exploits built with large language models (LLMs) can now mutate faster than many security teams can respond, and they are compressing the traditional timeline from vulnerability discovery to widespread attack from weeks to hours.

In this post, we explore two trending attack patterns: the rise of AI-generated polymorphic exploits and the sharp reduction in time-to-exploit. We also explain why traditional security approaches are struggling to keep pace.

What is an exploit and why does it matter?

An exploit is a piece of code or a technique that takes advantage of a vulnerability: a flaw or weakness in software or systems. If the vulnerability is an unlocked window, the exploit is the act of climbing through it.

When security researchers discover a vulnerability, they often publish a Proof of Concept (PoC): a demonstration that the vulnerability exists and can be exploited. A PoC is usually basic. It shows that exploitation is possible but does not provide a fully weaponized attack tool.

How did the traditional timeline work from discovery to weaponization?

Historically, there was a natural buffer between vulnerability disclosure and widespread attacks:

- Discovery: A vulnerability is found and reported

- PoC release: A proof of concept demonstrates the flaw

- Patch development: Vendors create fixes

- Weaponization: Skilled attackers develop functional exploit tools

- Script kiddie adoption: Automated tools make attacks accessible to less skilled individuals

The term “script kiddie” refers to individuals who use pre-made hacking tools without understanding how they work. They rely on tools built by more sophisticated attackers.

This timeline traditionally gave organizations weeks or even months to patch systems before widespread exploitation began. The weaponization phase (turning a PoC into a reliable, automated attack tool) required significant programming skill and time.

What is polymorphic malware?

Polymorphic malware is malicious software that repeatedly changes its code while keeping its core functionality. Each time it spreads or executes, it rewrites or re-encodes itself. Its byte pattern, and therefore its signature, changes with each copy, so every copy looks like a different program to security tools.

According to CISA’s cybersecurity alerts, polymorphic variants now account for a growing percentage of malware detected in enterprise environments.

Why are traditional detection methods failing?

Antivirus software and Endpoint Detection and Response (EDR) solutions have traditionally relied on three main approaches:

1. Signature-based detection

Security tools maintain databases of known malware “signatures”: file hashes or distinctive byte and code patterns that identify specific threats. When a file is scanned, its contents are compared against this database.

Limitation: If the code changes, the signature no longer matches. Polymorphic malware exploits this by producing a different byte pattern, and therefore a different signature, with each iteration.
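To make the limitation concrete, here is a minimal Python sketch of exact-match signature checking. It assumes a signature is simply the SHA-256 hash of a file's contents. Real engines also use byte patterns, rule languages, and fuzzy hashes. The two snippets and the `KNOWN_BAD` set are hypothetical and harmless: both compute the same result but differ byte for byte.

```python
import hashlib

# Two functionally equivalent, harmless snippets: same result (45),
# different variable names and structure, therefore different bytes.
VARIANT_A = b"total = 0\nfor n in range(10):\n    total += n\nprint(total)\n"
VARIANT_B = b"print(sum(x for x in range(10)))\n"


def sha256_signature(content: bytes) -> str:
    """Return the SHA-256 hex digest, used here as a stand-in 'signature'."""
    return hashlib.sha256(content).hexdigest()


# Hypothetical signature database seeded with the one variant already seen.
KNOWN_BAD = {sha256_signature(VARIANT_A)}


def is_known_bad(content: bytes) -> bool:
    """Exact-match lookup: any byte-level change produces a different digest."""
    return sha256_signature(content) in KNOWN_BAD


print(is_known_bad(VARIANT_A))  # True
print(is_known_bad(VARIANT_B))  # False, although it behaves identically
```

Any byte-level change yields an unrelated digest. A variant therefore only needs to differ from every stored sample to pass this kind of check.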

2. Behavioral analysis

More advanced tools monitor how programs behave rather than just examining their code. Suspicious behaviors, like accessing sensitive files or making unusual network connections, trigger alerts.

Limitation: AI can now generate malware that mimics legitimate software behavior or varies its behavioral patterns enough to avoid detection rules.
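Here is a minimal sketch of a behavioral rule. It assumes a hypothetical sensor that emits time-ordered `Event` records, and it flags any process that reads a sensitive file and then connects to a host outside an allowlist. The paths, host names, and `Event` shape are illustrative assumptions, not any vendor's schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    process: str  # process name reported by the (hypothetical) sensor
    action: str   # "file_read" or "net_connect" in this sketch
    target: str   # file path or destination host


SENSITIVE_PREFIXES = ("/etc/shadow", "/home/alice/.ssh/")  # hypothetical
ALLOWED_HOSTS = {"updates.example.com"}                     # hypothetical


def flag_exfil_pattern(events: list[Event]) -> set[str]:
    """Flag processes that read a sensitive file and later connect to an unlisted host."""
    touched_sensitive: set[str] = set()
    flagged: set[str] = set()
    for ev in events:  # events are assumed to arrive in time order
        if ev.action == "file_read" and ev.target.startswith(SENSITIVE_PREFIXES):
            touched_sensitive.add(ev.process)
        elif (ev.action == "net_connect"
              and ev.process in touched_sensitive
              and ev.target not in ALLOWED_HOSTS):
            flagged.add(ev.process)
    return flagged


events = [
    Event("backup", "file_read", "/home/alice/.ssh/id_rsa"),
    Event("backup", "net_connect", "updates.example.com"),  # allowlisted host
    Event("helper", "file_read", "/etc/shadow"),
    Event("helper", "net_connect", "203.0.113.7"),
    Event("updater", "net_connect", "203.0.113.7"),  # connects first...
    Event("updater", "file_read", "/etc/shadow"),    # ...then reads
]
print(flag_exfil_pattern(events))  # {'helper'}
```

The rule is easy to sidestep. `updater` performs the same two actions in the opposite order and is never flagged. Varying behavior like this is the evasion described above.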

3. Heuristic analysis

Security tools use rules and algorithms to identify potentially malicious code based on suspicious characteristics, even without a known signature.

Limitation: AI-generated polymorphic code can be designed to avoid triggering these heuristic rules while still achieving malicious objectives.
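Heuristic engines are often described as scoring a sample on weighted suspicious traits and alerting above a threshold. The sketch below assumes exactly that model. The trait names, weights, and `ALERT_THRESHOLD` are hypothetical.

```python
# Hypothetical traits and weights; real engines use far larger, tuned rule sets.
HEURISTIC_WEIGHTS = {
    "high_entropy_section": 3,    # often a sign of packed or encrypted code
    "disables_security_tool": 5,
    "writes_autorun_key": 2,      # persistence
    "unsigned_binary": 1,
}
ALERT_THRESHOLD = 6  # hypothetical cut-off


def heuristic_score(traits: set[str]) -> int:
    """Sum the weights of the observed traits; unknown traits score 0."""
    return sum(HEURISTIC_WEIGHTS.get(t, 0) for t in traits)


def is_suspicious(traits: set[str]) -> bool:
    """Alert when the combined score reaches the threshold."""
    return heuristic_score(traits) >= ALERT_THRESHOLD


print(is_suspicious({"high_entropy_section", "disables_security_tool"}))  # True (score 8)
print(is_suspicious({"writes_autorun_key", "unsigned_binary"}))           # False (score 3)
```

The second sample still establishes persistence but stays under the threshold by avoiding the heavily weighted traits. This is the kind of rule-aware tuning the limitation refers to.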

How does AI supercharge polymorphic attacks?

Traditional polymorphic malware used relatively simple techniques: basic encryption, code substitution, or instruction reordering. The variations, while numerous, followed predictable patterns that security researchers could eventually identify.

AI changes this equation dramatically.

LLMs and code-generation AI can now create functionally equivalent code that looks entirely different each time. Instead of simple obfuscation, AI can:

- Rewrite exploit logic using different algorithms

- Generate unique variable names, function structures, and code flow

- Adapt code style to mimic legitimate software

- Create variations that evade specific detection rules

Each generated version is structurally different, not just a scrambled copy of the last. This makes signature-based detection largely ineffective and significantly complicates behavioral and heuristic analysis.

The FBI’s Internet Crime Complaint Center (IC3) has reported a surge in AI-assisted cybercrimes, with attackers leveraging these capabilities to evade traditional defenses.

What is vibecoding and how does it lower the barrier for threat actors?

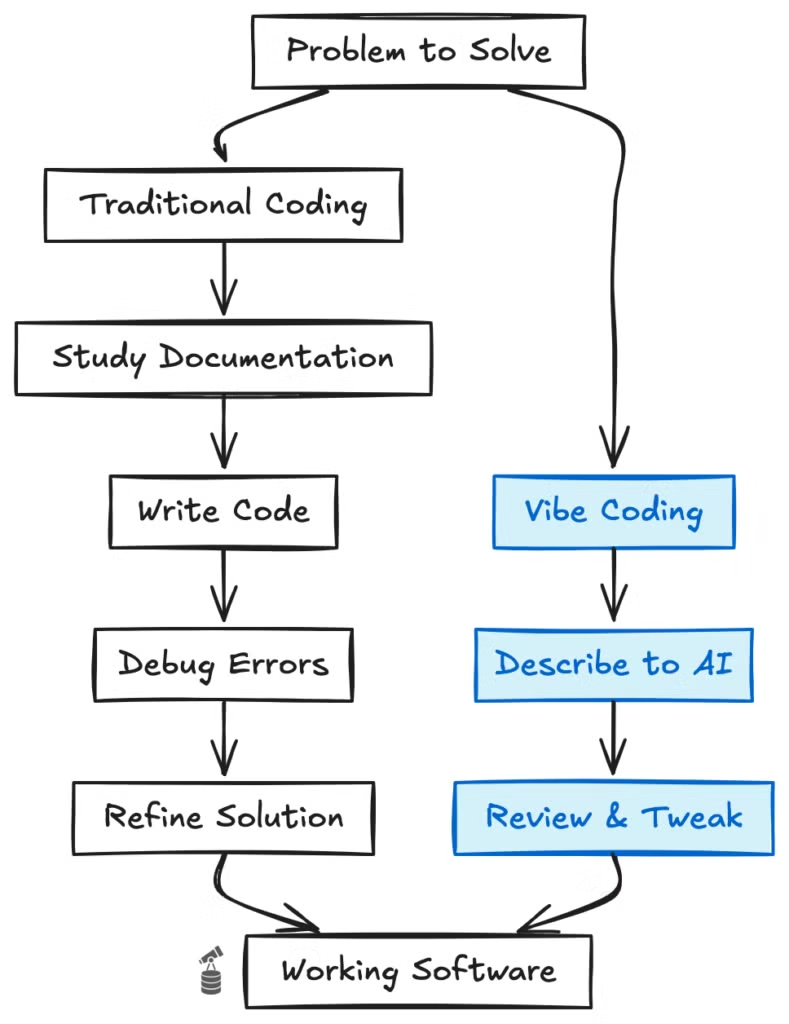

“Vibecoding” describes the practice of using AI assistants to write code through natural language conversation. Instead of writing code line by line, developers describe what they want, and AI generates the code.

For legitimate developers, this accelerates software creation. For malicious actors, it eliminates the technical barrier to weaponizing exploits.

How has the timeline collapsed from weeks to hours?

The traditional weaponization process required:

- A skilled attacker to understand the vulnerability deeply

- Writing reliable exploit code from scratch

- Testing across different environments

- Building automation and evasion capabilities

This required advanced programming knowledge and could take weeks or months.

With AI-assisted development:

- An attacker describes the vulnerability to an AI

- The AI generates functional exploit code

- The attacker requests modifications for different targets

- Automation and obfuscation are added through simple prompts

What once required elite skills now requires only the ability to describe objectives clearly. The time from PoC to weaponized tools has collapsed from weeks to hours or even minutes.

What does this look like in real-world attacks?

Recent incidents demonstrate the severity of AI-powered polymorphic threats:

- Financial sector targeting: Attackers deployed AI-generated variants of banking trojans that mutated every 15 minutes, evading signature-based detection across multiple financial institutions.

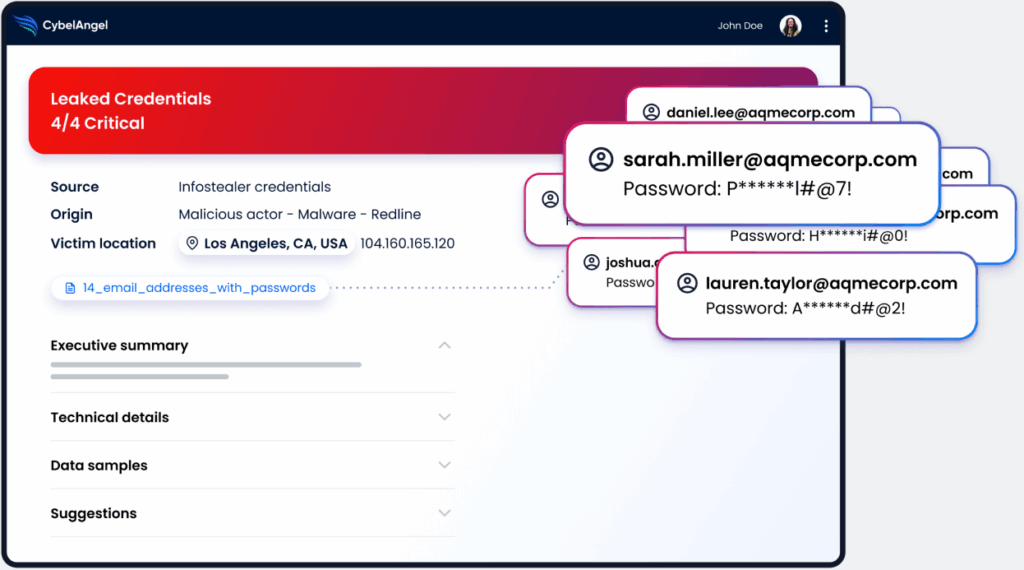

- Credential harvesting at scale: Cybercriminals used AI-generated phishing campaigns combined with polymorphic infostealers to compromise thousands of corporate credentials, later sold on dark web marketplaces.

- Supply chain exploitation: Threat actors weaponized a publicly disclosed vulnerability within 6 hours using AI code generation, targeting software supply chains before patches could be deployed.

What happens when speed meets mutation?

These two trends (AI-powered polymorphism and accelerated weaponization) compound each other:

- Exploits are weaponized faster than ever

- Each deployment uses unique, AI-generated code

- By the time security tools identify one variant, thousands more exist

- Traditional detection approaches cannot keep pace

The NIST Cybersecurity Framework now emphasizes the need for adaptive, AI-powered defenses to match the sophistication of modern threats.

What must defenders do differently?

The assumption that organizations have time to analyze threats and deploy protections no longer holds. When attackers can weaponize vulnerabilities in hours and generate a practically unlimited number of unique variants, defenders must:

1. Detect breaches faster

The window between compromise and detection must shrink dramatically. Every hour a breach goes undetected means more data exfiltrated and more systems compromised.
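One way to track whether that window is shrinking is to measure dwell time, the gap between compromise and detection, across incidents. Here is a minimal sketch, assuming each incident record carries both timestamps. The `INCIDENTS` values are illustrative, not real data.

```python
from datetime import datetime, timedelta
from statistics import median

# Illustrative incident records: (time of compromise, time of detection).
INCIDENTS = [
    (datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 1, 15, 0)),   # 6 h
    (datetime(2024, 3, 4, 22, 0), datetime(2024, 3, 6, 10, 0)),  # 36 h
    (datetime(2024, 3, 9, 8, 30), datetime(2024, 3, 9, 9, 0)),   # 0.5 h
]


def dwell_times(incidents: list[tuple[datetime, datetime]]) -> list[timedelta]:
    """How long each compromise went undetected."""
    return [detected - compromised for compromised, detected in incidents]


def median_dwell_hours(incidents: list[tuple[datetime, datetime]]) -> float:
    """Median dwell time in hours (timedelta / timedelta yields a float)."""
    return median(d / timedelta(hours=1) for d in dwell_times(incidents))


print(median_dwell_hours(INCIDENTS))  # 6.0
```

The median is used rather than the mean so that a single long-running breach does not hide improvement across the rest.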

2. Monitor for outcomes, not signatures

Instead of trying to identify every possible attack variant, focus on detecting the results of successful attacks: data appearing where it shouldn’t, credentials being misused, unauthorized access patterns.
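As an example of outcome-based monitoring, the sketch below flags a login when an account is used from a country it has never logged in from before, regardless of which malware variant stole the credential. The `(user, country)` record format is an assumption. Production systems typically weigh many more signals, such as device, network, and time of day.

```python
from collections import defaultdict


def flag_new_locations(history, new_logins):
    """Return (user, country) pairs for logins from a country new to that user.

    history and new_logins are iterables of (user, country) tuples (assumed format).
    """
    seen = defaultdict(set)
    for user, country in history:
        seen[user].add(country)
    alerts = []
    for user, country in new_logins:
        if country not in seen[user]:
            alerts.append((user, country))
        seen[user].add(country)  # alert only on the first login from a new country
    return alerts


history = [("alice", "FR"), ("alice", "FR"), ("bob", "US")]
new_logins = [("alice", "FR"), ("alice", "RU"), ("bob", "US"), ("alice", "RU")]
print(flag_new_locations(history, new_logins))  # [('alice', 'RU')]
```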

3. Leverage AI defensively

Use the same AI capabilities to analyze threats, identify patterns across seemingly unrelated incidents, and predict attack evolution.

4. Shift to proactive external monitoring

Rather than waiting for attacks to reach internal systems, monitor external sources for signs of compromise: leaked credentials, stolen data appearing on dark web markets, early indicators of targeting.
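Here is a minimal sketch of one external-monitoring task: scanning a leaked credential dump for addresses on your own domain. It assumes the common `email:password` line format and a hypothetical domain `example.com`. It keeps only the matching addresses and discards the secrets.

```python
def find_exposed_accounts(leaked_lines, corporate_domain):
    """Return lower-cased corporate e-mail addresses found in 'email:password' lines.

    Exact domain match only: subdomains such as sub.example.com are not included.
    """
    suffix = "@" + corporate_domain.lower()
    exposed = set()
    for line in leaked_lines:
        email, sep, _secret = line.strip().partition(":")  # secret is discarded
        if sep and email.lower().endswith(suffix):
            exposed.add(email.lower())
    return exposed


LEAK_SAMPLE = [
    "Alice@Example.com:hunter2",
    "bob@other.org:pw",
    "carol@example.com:secret",
    "malformed-line",
    "dave@sub.example.com:x",
]
print(sorted(find_exposed_accounts(LEAK_SAMPLE, "example.com")))
# ['alice@example.com', 'carol@example.com']
```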

According to CISA’s Known Exploited Vulnerabilities Catalog, the median time to exploitation for new vulnerabilities has decreased by 73% since 2020.

How can organizations stay ahead?

Traditional detection methods (signature matching, behavioral rules, and heuristic analysis) were designed for a slower, more predictable environment. Today’s defenders face adversaries who can generate a practically unlimited number of unique attack variants and weaponize new vulnerabilities in hours rather than weeks. Success in this new landscape requires:

- Matching AI capabilities with AI-powered defense

- Dramatically reducing detection times

- Extending visibility beyond the traditional perimeter to catch breaches at the earliest possible moment

- Monitoring the external attack surface for compromised credentials and leaked data before attackers can weaponize them

CybelAngel’s platform combines AI-powered threat detection with external monitoring across the surface, deep, and dark web to identify threats before they reach your perimeter.