How AI-assisted Development is Fueling a new Wave of Public Repository Data Leaks

Table of contents

Large language models have made coding accessible to virtually everyone. Sales representatives, HR operations managers, customer success teams, anyone with a clear idea and a prompt can now produce working software in minutes. That democratization is genuinely exciting.

But it is also creating one of the fastest-growing data breach vectors we have seen in years: sensitive corporate data leaking through publicly accessible code repositories on platforms like GitHub and GitLab.

Linked media to your previous article here

What changed with AI in enterprise software development

Until recently, software development had a natural access barrier: you needed to know how to write code. That constraint served as an informal gatekeeper for certain security practices. Engineers who had spent years in the craft understood concepts like secrets management, environment variable hygiene, API key rotation, and repository visibility.

LLMs have effectively eliminated that barrier. For experienced engineers, this is a powerful accelerator, they bring architectural knowledge and security intuition to guide the model toward high-quality outputs. But LLMs do not just amplify what experienced developers already do. They enable a completely new population of code authors: people who have never written a line of code professionally and have no frame of reference for what secure software looks like.

The rise of the accidental developer

The profile of the person pushing code to a repository has changed. Across organizations, we are now seeing sales teams building prospecting automations, customer success managers writing scripts to process client data, HR professionals automating reporting workflows, and finance teams creating utilities to transform financial data.

These users are not malicious. They are resourceful. The problem is that they are operating entirely outside the software delivery pipeline, with no visibility into architecture standards, no access to internal secrets management systems, and no training in secure coding practices. The same dynamic that drives account takeover attacks through stolen credentials is now playing out in code repositories, access obtained not through attack, but through oversight.

Why LLM-generated code is functionally strong but structurally risky

LLMs are good at generating code that solves the stated problem. What the model optimizes for is functional correctness. What it does not naturally optimize for are the structural constraints experienced engineers take for granted: credentials hardcoded in the source rather than injected from a secrets vault, personal API tokens used instead of service accounts, no separation between configuration and logic, and code pushed directly to a public repository because it was the easiest starting point.

The model does exactly what it was asked. The problem is that the person asking often did not know to ask for security. And once that code, complete with hardcoded API keys, authentication tokens, or sensitive customer data, is committed to a public repository, the clock starts ticking.

Why public repositories have become a critical exposure vector

GitHub and GitLab are the natural home for code, even for someone who has never heard of version control. LLMs routinely suggest creating a repository as a first step, and the default for a personal or exploratory project is often public.

Public repositories are indexed by search engines, crawled by automated scanners, and monitored by threat actors specifically looking for leaked credentials. The exposure window can be extraordinarily short: a secret committed and then deleted is still present in the repository’s git history and may already have been captured by a scanner. GitHub’s native secret scanning is only effective when developers operate within managed workflows, a condition that increasingly does not hold for non-engineering code authors.

What kinds of sensitive data end up exposed

Based on what CybelAngel detects across client organizations, the most commonly exposed data includes API keys and authentication tokens for cloud providers (AWS, GCP, Azure) and internal services, database connection strings including hostnames and credentials, private keys and certificates, internal configuration files exposing network topology, sensitive business data included in test fixtures, and credentials for third-party platforms including CRM systems, HR tools, and payment processors.

Many of these exposures are not the result of a sophisticated attack. They are functional code that was never designed with security in mind, written by someone who did not know it was a problem.

What CybelAngel is seeing: a measurable surge in high-severity findings

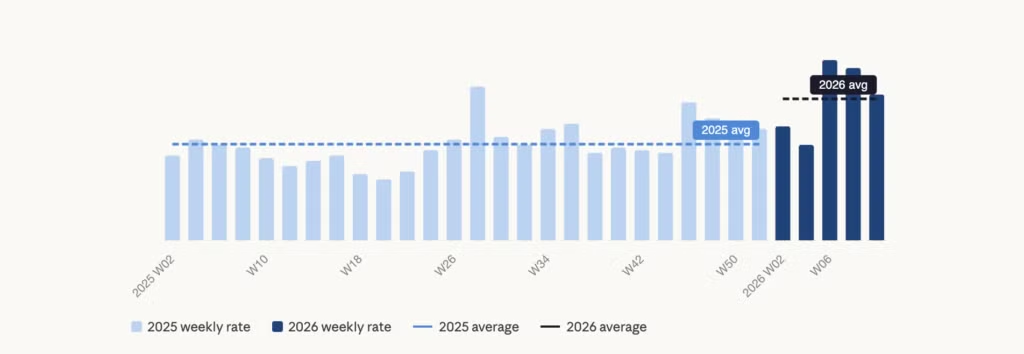

The trend is not anecdotal. CybelAngel has been monitoring external data exposures, including public code repositories, for clients for nearly a decade. Over the past two years, we have seen a consistent increase in security reports at severity level 3 (High) and level 4 (Critical) directly linked to open-source repositories containing confidential client information.

Our weekly reports rate shows a clear upward trend from late 2025 into early 2026, with Q1 2026 peaks representing the highest volumes we have measured. The proportion of findings attributable to public code repositories has grown substantially, driven directly by LLM-generated code being pushed to public platforms.

This is not a slow drift. It is an acceleration. Unlike credential theft through phishing or malware, these exposures do not require an adversary to actively target an organization. They are discovered passively, by automated scanners indexing public repositories at scale. By the time a security team notices the exposure, it has likely already been seen.

What organizations must do differently

The traditional perimeter for managing code security, keeping it within the engineering team, enforcing policies through CI/CD pipelines, requiring code reviews, no longer covers the full population of people writing and publishing code.

There is also a critical distinction worth making: scanning for secrets (API keys, tokens, credentials with a recognizable format) is a capability most major code hosting platforms now support natively. But detecting whether a repository contains genuinely sensitive information, internal documents, customer data, confidential business logic, is a fundamentally harder problem that platform-native tools are not built to solve. Closing that gap requires a different class of external attack surface monitoring.

Extend security awareness beyond engineering

Security training needs to reach non-engineering roles. If your sales team is building automations that connect to internal systems, they need, at minimum, to understand why putting credentials in a script is a problem and what the correct alternative is.

Deploy secrets scanning across all repositories

Tools like GitHub Advanced Security and GitGuardian can detect secrets being committed in real time. These tools are valuable but limited to structured secrets with recognizable patterns. They are not designed to identify customer email lists, hardcoded internal pricing models, or confidential business documents. Secrets scanning is a necessary baseline. It is not sufficient on its own.

Expand your perimeter to include public code platforms

Threat actors search public platforms for content that references your organization: domain names, internal system identifiers, branded keywords, proprietary data patterns. Your security team needs visibility into what exists publicly, not just what you know about. This is where EASM becomes essential, a continuous, automated capability to discover and monitor your organization’s exposure across the open internet, including public code repositories.

Move from reactive to proactive detection

Discovering a leak weeks after it occurred is better than never, but the window of risk is already open. Proactive monitoring combined with rapid notification is what shrinks the gap between exposure and remediation. That requires the ability to identify sensitive business information in unstructured code and files, deep contextual analysis, not pattern matching.

FAQs

No. Git retains the full commit history, including deleted files. A secret pushed to a public repository and then deleted is still accessible in the git log and may already have been captured by automated scanners that index repositories continuously. The only safe assumption once a credential has been committed publicly is that it has been seen. Rotate it immediately.

Yes, and at growing scale. LLMs generate repository setup as a default first step for most project types, and personal GitHub accounts default to public visibility. CybelAngel is detecting a measurable increase in high-severity findings linked to repositories created outside engineering teams, across sales, operations, finance, and HR functions.

Partly. GitHub’s secret scanning detects credentials with recognizable formats, API key patterns, tokens, and connection strings, when developers are operating within GitHub’s managed workflows. It does not detect sensitive business data in unstructured files, internal documents committed as test fixtures, or confidential logic embedded in scripts. Platform-native tools address a subset of the risk. External monitoring covers the rest.

CybelAngel scans public code repositories continuously. When content linked to a client organization surfaces, including domain names, internal identifiers, or sensitive data patterns, we alert the client with a verified finding and remediation guidance. The goal is to close the gap between when an exposure occurs and when your security team knows about it.

The velocity of this problem will only increase. As LLMs become more embedded in everyday workflows, the volume of code generated and published by non-engineers will keep growing.

At CybelAngel, we continuously monitor public code repositories, cloud storage, dark web forums, and hundreds of other external sources for content that should not be public. When something surfaces that belongs to one of our clients, we alert them fast, with context and with clear guidance for remediation.

The democratization of coding is a positive development. Keeping your security posture in step with it means extending your visibility beyond the walls you built when only engineers wrote code.

See what your repositories are exposing.